Malicious Packages Don't Fit the Vulnerability Intelligence Model

By Jenn Gile, Co-Founder, OpenSourceMalware and VulnCon 2026 speaker

Monday, April 20th, 2026

When a malicious package appears in a public registry, the response motion looks familiar. A record appears in a vulnerability database (like OSV). Security tools fire an alert. Someone opens a ticket. The workflow feels right because it resembles every CVE remediation cycle that came before it.

But somewhere between the alert and the actual response, things start to break down. The data doesn't have what you need. The playbook gives you the wrong instructions. And in some cases, following established vulnerability management practice makes your exposure worse, not better.

This isn't a criticism of the vulnerability intelligence infrastructure. It's an observation that malicious open source packages are a close enough cousin to vulnerabilities that the instinct to handle them the same way is understandable, but different enough that the instinct fails in consequential ways. A square peg and a round hole are both shapes. That doesn't make them compatible.

Malicious Open Source Is a Distinct Threat Category

"Malware" covers a lot of ground. This article is specifically about intentionally malicious packages published to public open source registries. That is, packages crafted by attackers and uploaded to npm, PyPI, and similar ecosystems with the intent to compromise whoever installs them. Not accidental vulnerabilities in legitimate packages. Not phishing. Not malware delivered through other vectors.

For the purposes of this piece, a vulnerability is a security bug (typically assigned a CVE), meaning an unintentional flaw introduced by a developer that creates exploitable conditions.

Vulnerability Database Alerts Are Necessary, but Insufficient for Malicious Package Response

When a malicious package is detected, practitioners reach for OSV and similar infrastructure because that's what exists. And those alerts are worth having. Knowing a malicious package exists is the minimum viable starting point.

But consider what happens when you try to act on that information.

Take axios, one of the most widely used JavaScript HTTP clients on earth, downloaded roughly 100 million times per week. On March 30, 2026, two malicious versions (1.14.1 and 0.30.4) were published by a threat actor who had hijacked the account of the primary maintainer. The OSV record (MAL-2026-2307) tells you the package name, a purl, the publication timestamp, a one-line summary, the affected version ranges, and a minimal IOC block.

That record answers the question the CVE data model was built to answer: what is affected, and what version introduced the problem? For a vulnerability, those answers point directly toward remediation: upgrade past the affected version, apply the patch, close the ticket.
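As a rough illustration of that "what is affected?" question, the sketch below asks the public OSV query endpoint about a single package version and keeps only the malicious-package records. It assumes the OSV v1 query API and the `MAL-` identifier prefix used for malicious-package entries; the helper names (`build_query`, `query_osv`, `malware_ids`) are made up for this example.

```python
import json
import urllib.request

# Public OSV query endpoint (assumed reachable from your network).
OSV_QUERY_URL = "https://api.osv.dev/v1/query"


def build_query(name: str, version: str, ecosystem: str = "npm") -> dict:
    """Build the request body for a single package/version lookup."""
    return {"version": version, "package": {"name": name, "ecosystem": ecosystem}}


def query_osv(name: str, version: str, ecosystem: str = "npm") -> dict:
    """POST the lookup to OSV and return the decoded JSON response."""
    body = json.dumps(build_query(name, version, ecosystem)).encode("utf-8")
    request = urllib.request.Request(
        OSV_QUERY_URL, data=body, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(request, timeout=10) as response:
        return json.load(response)


def malware_ids(osv_response: dict) -> list[str]:
    """Keep only records whose ID carries the malicious-package prefix."""
    return [
        record["id"]
        for record in osv_response.get("vulns", [])
        if record.get("id", "").startswith("MAL-")
    ]
```

Calling `malware_ids(query_osv("axios", "1.14.1"))` would tell you whether a record exists for that version. It would not tell you what the package did once installed.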

Except when it comes to malware, there's no upgrading. With an account takeover like axios, the remediation is to roll back to a non-malicious version and remove the injected dependency plain-crypto-js. And in the case of a “born bad” package that was never legitimate (such as tensorflow-opt, which was live for years), the only option is complete removal.
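A minimal sketch of that first check, assuming an npm lockfile in the v2/v3 layout (a top-level `packages` map keyed by `node_modules/...` paths). The version numbers and the injected dependency name come from the axios example above; the function name and the finding strings are illustrative only.

```python
import json

# Versions and injected dependency named in the axios example.
MALICIOUS_AXIOS_VERSIONS = {"1.14.1", "0.30.4"}
INJECTED_DEPENDENCY = "plain-crypto-js"


def find_exposure(lockfile_text: str) -> list[str]:
    """Return findings for an npm lockfile (v2/v3 "packages" layout assumed)."""
    lock = json.loads(lockfile_text)
    findings = []
    for path, meta in lock.get("packages", {}).items():
        # Keys look like "node_modules/axios" or "node_modules/a/node_modules/axios".
        name = path.rsplit("node_modules/", 1)[-1]
        version = meta.get("version", "")
        if name == "axios" and version in MALICIOUS_AXIOS_VERSIONS:
            findings.append(f"roll back: {path}@{version}")
        elif name == INJECTED_DEPENDENCY:
            findings.append(f"remove: {path}@{version}")
    return findings
```

A lockfile hit only shows that the package was resolved. Whether its install script actually ran on a given machine is a separate question.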

But that's where the OSV record ends. And it’s exactly where the investigation needs to begin.

Malware and Vulnerabilities Require Fundamentally Different Intelligence

The temptation after recognizing this gap is to ask how vulnerability databases could be improved to handle malicious packages better. That's the wrong question. OSV does what it was designed to do, and it does it well. The problem isn't the quality of the tool. It's that malicious packages are a different shape of problem entirely.

A vulnerability is passive. It sits in code waiting to be exploited under the right conditions. A malicious package is active. It executes on install. A vulnerability has a fixed version to upgrade to. A malicious package is often the latest version. A vulnerability targets applications. A malicious package targets the developer and the build environment.

These aren't variations on the same threat. They're different animals. And they need different intelligence infrastructure built around different questions.

Responding to Malware Requires a Separate Category of Threat Intelligence

Once you know a malicious package exists and have removed or rolled it back, the real investigative work begins. Did it execute in your environment? If so, what did it do? Who is behind it, and are other packages at risk? These questions require data that vulnerability databases weren’t designed to capture.

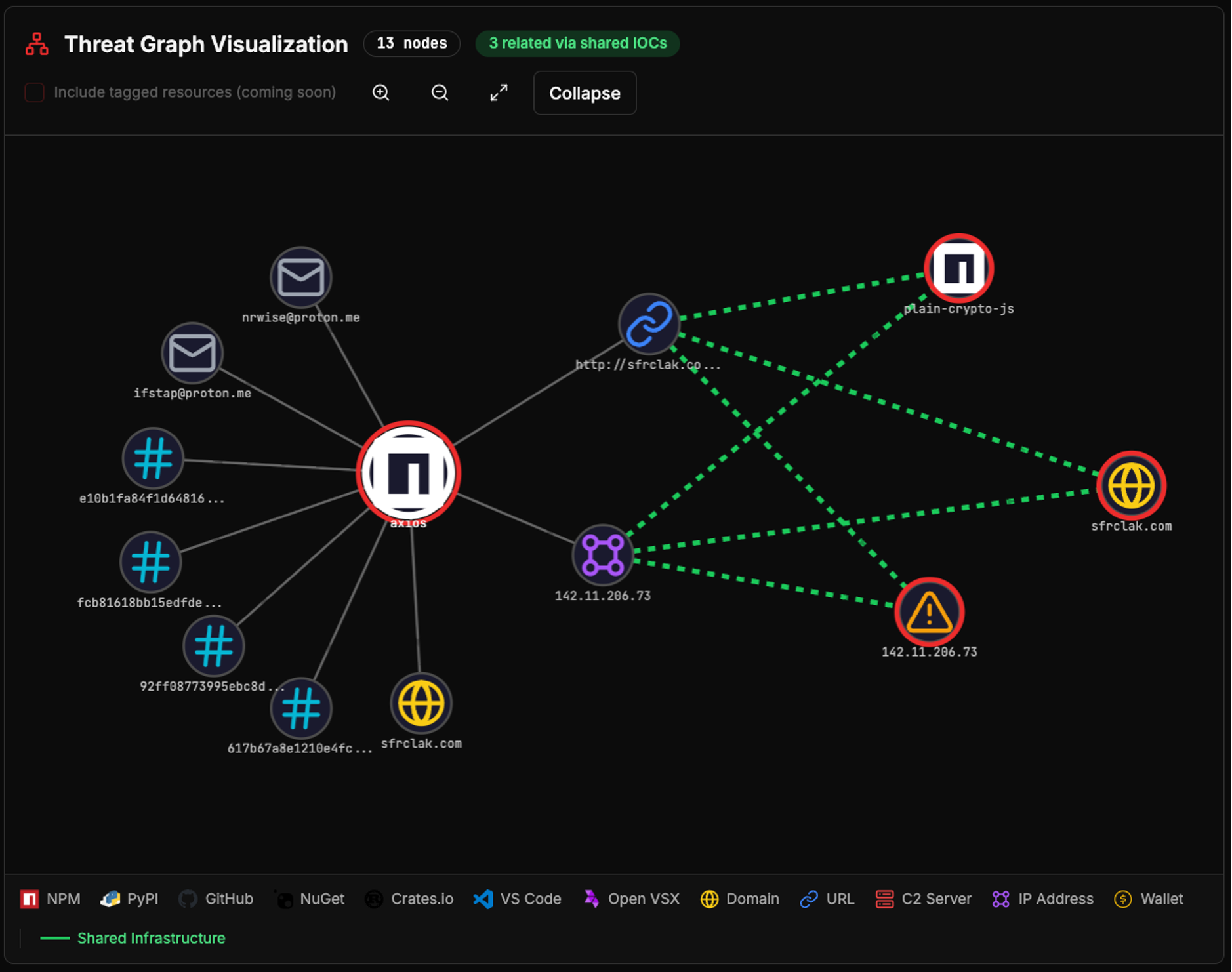

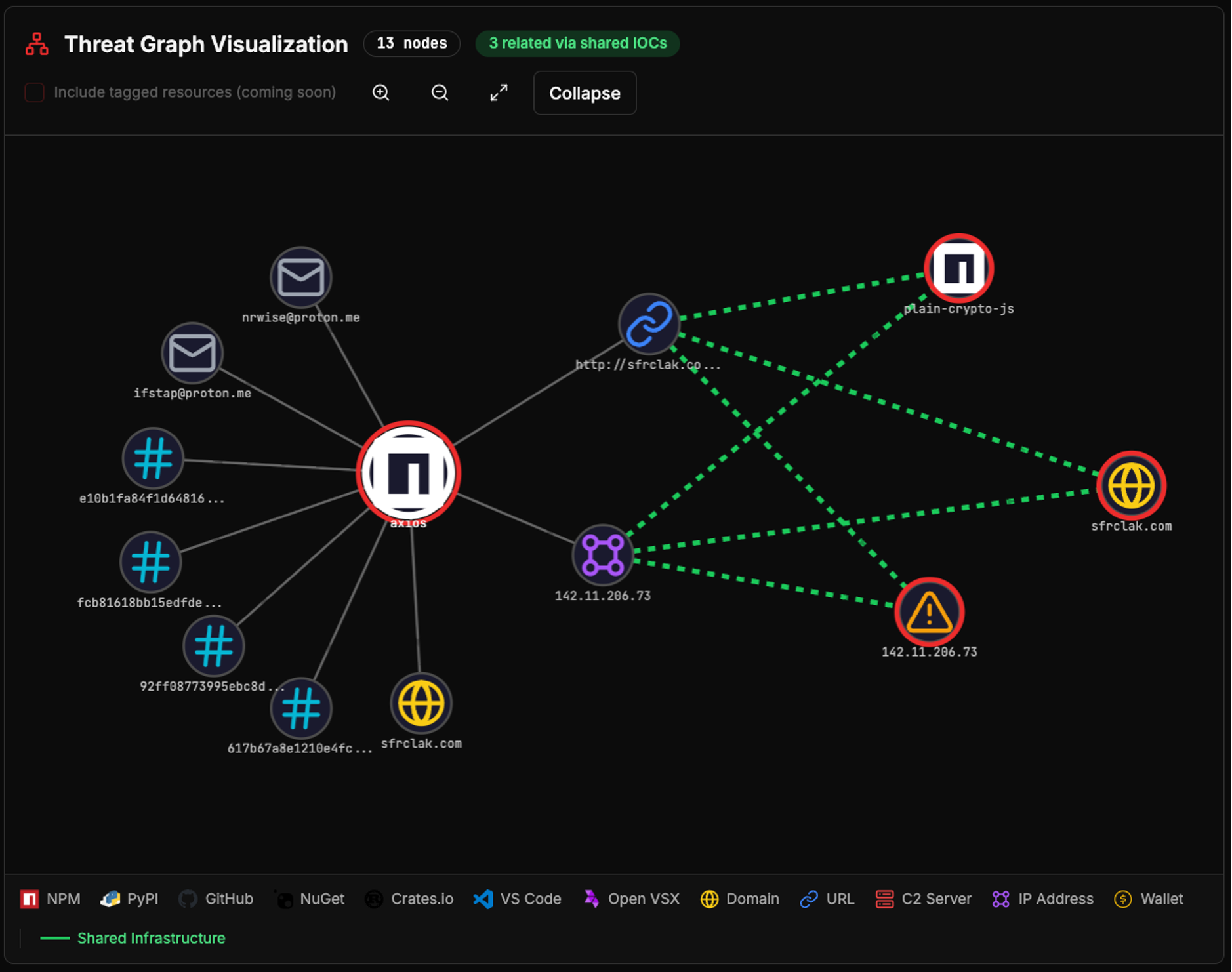

Tools purpose-built for malicious asset intelligence, such as OpenSourceMalware.com, organize this data into categories that map directly to investigative actions. Using axios as an example, we’ll walk through what that looks like in practice.

The Payload: What the Malware Actually Did

The first question after removal is whether the malware executed, and if so, what it did. This requires structured behavioral data, not a prose summary.

Payload data fields:

- Payload file — The specific file (e.g. lib/tools.js) within the package where malicious code lives

- Payload hash — A cryptographic fingerprint confirming whether the artifact you pulled is the malicious one, or a clean version cached upstream

- Behavioral tags — Structured labels (e.g. data-exfiltration, code-execution, obfuscation, infostealer, RCE) describing what the malware does, usable as detection rule inputs

- Specific behaviors — The exact functions and patterns observed (e.g. execSync, child_process.spawn, require('vm'), exec(str)), mapped to specific files within the package
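To show how the "specific behaviors" field could feed a detection rule, here is a small sketch that maps the example call patterns from the list above to the files where they appear. The regular expressions are rough assumptions written for this example, not a vetted rule set, and obfuscated code will slip past them.

```python
import re

# Patterns based on the example behaviors listed above (illustrative, not exhaustive).
SUSPICIOUS_CALLS = {
    "execSync": re.compile(r"\bexecSync\s*\("),
    "child_process.spawn": re.compile(r"\bchild_process\.spawn\s*\("),
    "require('vm')": re.compile(r"""\brequire\(\s*['"]vm['"]\s*\)"""),
    "exec(str)": re.compile(r"\bexec\s*\("),
}


def map_behaviors(files: dict[str, str]) -> dict[str, list[str]]:
    """Map each file path to the suspicious call labels found in its source."""
    report = {}
    for path, source in files.items():
        hits = [label for label, pattern in SUSPICIOUS_CALLS.items() if pattern.search(source)]
        if hits:
            report[path] = hits
    return report
```

Here `files` is assumed to be a mapping of package-relative paths to source text, for example read from an unpacked tarball.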

For axios, the payload was setup.js inside the injected dependency plain-crypto-js (hash: e10b1fa84f1d6481625f741b69892780140d4e0e7769e7491e5f4d894c2e0e09). The specific behaviors recorded include a cross-platform remote access trojan (RAT) dropper contacting a C2 server, platform-specific second-stage payloads for macOS, Windows, and Linux, multi-layer obfuscation using reversed base64 and XOR encryption, and post-execution self-deletion designed to frustrate forensic analysis.

Each of these behaviors maps to a detection rule you can write, a log source you can query, and a hypothesis you can test in your environment. MAL-2026-2307 gives you a C2 domain. Structured behavioral tags, payload file identification, and a payload hash tell you what actually ran.
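One way to use the payload hash is to compare it against the files in your own caches and build artifacts. The sketch below assumes the published value is a SHA-256 digest (it is 64 hex characters long, but the algorithm is not stated above); the function names are illustrative.

```python
import hashlib
from pathlib import Path

# Payload hash from the axios example; SHA-256 is an assumption based on its length.
KNOWN_PAYLOAD_SHA256 = "e10b1fa84f1d6481625f741b69892780140d4e0e7769e7491e5f4d894c2e0e09"


def sha256_of(path: Path) -> str:
    """Hash a file in chunks so large artifacts do not need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def is_known_payload(path: Path) -> bool:
    """True when the file's digest matches the recorded payload hash."""
    return sha256_of(path) == KNOWN_PAYLOAD_SHA256
```

Because the payload reportedly deletes itself after execution, a missing file is not evidence of a clean machine. Registry mirrors and package caches are more likely places to find a match.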

The Threat Actor: Who's Behind the Malware and How to Attribute

Malicious packages don't appear from nowhere. Behind every one is infrastructure that attackers control and often reuse, from GitHub repositories to crypto wallets. Identifying that infrastructure is how you attribute activity, track threat actors across campaigns, and anticipate what else they may have touched.

Threat actor data fields:

- Registry user — The account that published the malicious package, a key attribution starting point

- Email addresses — Contact identifiers associated with the actor, often reused across campaigns

- Domains — Attacker-controlled or actor-linked domains, usable as network-layer IOCs

- C2 infrastructure — The specific endpoints (e.g. Discord webhooks, Slack webhooks) where stolen data was sent

For axios, the recorded infrastructure includes the C2 server at sfrclak.com (IP 142.11.206.73), the attacker-controlled email addresses ifstap@proton.me and nrwise@proton.me, and the staging account nrwise used to pre-position the malicious plain-crypto-js dependency 18 hours before the attack. These are not background details. The C2 domain used in the axios compromise today may be the same infrastructure used in a different campaign tomorrow. If you have those indicators, you can query your network logs now and catch future campaigns that reuse the same infrastructure before the next malicious package is even reported.
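A log query along those lines might look like the sketch below. It assumes plain-text proxy or DNS logs with one event per line, which is a hypothetical format; the domain and IP are the ones recorded for the axios compromise, and the function names are made up for illustration.

```python
import re

# Network indicators recorded for the axios compromise.
IOC_DOMAINS = {"sfrclak.com"}
IOC_IPS = {"142.11.206.73"}

# Split each line into hostname-like and IP-like tokens.
TOKEN = re.compile(r"[A-Za-z0-9.-]+")


def indicators_in(line: str) -> set[str]:
    """Return the indicators that appear as whole hosts or IPs in one log line."""
    found = set()
    for token in TOKEN.findall(line.lower()):
        token = token.strip(".")
        if token in IOC_IPS:
            found.add(token)
        for domain in IOC_DOMAINS:
            # Match the domain itself or any subdomain of it.
            if token == domain or token.endswith("." + domain):
                found.add(domain)
    return found


def find_hits(lines: list[str]) -> list[tuple[int, list[str]]]:
    """Return (line number, sorted indicators) for every line with a match."""
    hits = []
    for number, line in enumerate(lines, start=1):
        matched = indicators_in(line)
        if matched:
            hits.append((number, sorted(matched)))
    return hits
```

Matching on whole tokens rather than substrings avoids false hits on lookalike hosts that merely contain the indicator string.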

This category of data has no equivalent in the CVE model. Vulnerabilities don't have C2 infrastructure. The schema has no field for it.

The Campaign: How the Malware Connects to Broader Activity

Perhaps the most consequential gap between vulnerability intelligence and malicious package intelligence is the concept of campaign linkage. A CVE is by design a discrete, bounded record. A malicious package is often one node in a coordinated campaign.

Campaign data fields:

- Tags — Structured labels describing actor behavior and context (e.g. discord, slack, dprk, typosquatting), useful for pattern matching across campaigns

- Indicators of Compromise — The signals tied to this threat that indicate whether you are dealing with an isolated package or a coordinated attack

- Related malicious packages — Other packages that share infrastructure with this one, defining the true scope of your response

For axios, the OSV record includes a minimal IOC block: one C2 URL, one IP address, one domain. In reality, there are at least 9 IOCs for this campaign, including two that are shared infrastructure for three other malicious assets. Each of those additions maps to a concrete investigative action the OSV record wasn’t designed to support.
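Campaign linkage is essentially a join on shared indicators. The sketch below assumes you already hold a mapping from package name to its set of IOCs, as a purpose-built feed might provide; the package names and indicator values in the usage example are placeholders, not real campaign data.

```python
def related_packages(package_iocs: dict[str, set[str]], target: str) -> dict[str, set[str]]:
    """Map every other package to the IOCs it shares with the target package."""
    target_iocs = package_iocs.get(target, set())
    related = {}
    for name, iocs in package_iocs.items():
        shared = iocs & target_iocs
        if name != target and shared:
            related[name] = shared
    return related


# Placeholder data: names and indicators are invented for illustration.
feed = {
    "pkg-a": {"c2.example", "198.51.100.7"},
    "pkg-b": {"198.51.100.7"},
    "pkg-c": {"other.example"},
}
linked = related_packages(feed, "pkg-a")
```

In this placeholder data, `pkg-b` is linked to `pkg-a` through one shared IP address, so it belongs in the scope of the same response.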

The threat actor behind the axios compromise is unknown at the time of this writing. By contrast, the Contagious Interview campaign (known to be delivered by the Lazarus Group) contains at least 58 distinct packages, many of which share infrastructure that quickly tells researchers when a new variation surfaces.

Malicious OSS Packages Need Their Own Intelligence Track

The solution is not to make OSV or other vulnerability databases better at handling malware. Nor is it to create a unified process that works for both. It’s to recognize that malicious packages are a different animal and build intelligence infrastructure that reflects that. Not "what version is fixed?" but "what did it do, where did it phone home, and what else is it connected to?"

The question is not how to make the round hole bigger. It is time to build a square one.

That infrastructure is starting to emerge. For teams looking to build malicious package detection and response workflows, consuming a purpose-built feed is a practical starting point. OpenSourceMalware.com has a free API for new malicious package alerts, and it was designed (as the name implies) specifically to solve this problem.